Bridging Political Divides With Artificial Intelligence

Sociologist builds AI chatbot to help mediate heated political discussions

Q: In your new study, an AI chatbot serves as a communication intermediary between two people on different sides of an issue. The chatbot evaluates the language of a question and rephrases it to be more constructive for the person receiving it. What are the basic logic and hypotheses behind this experiment?

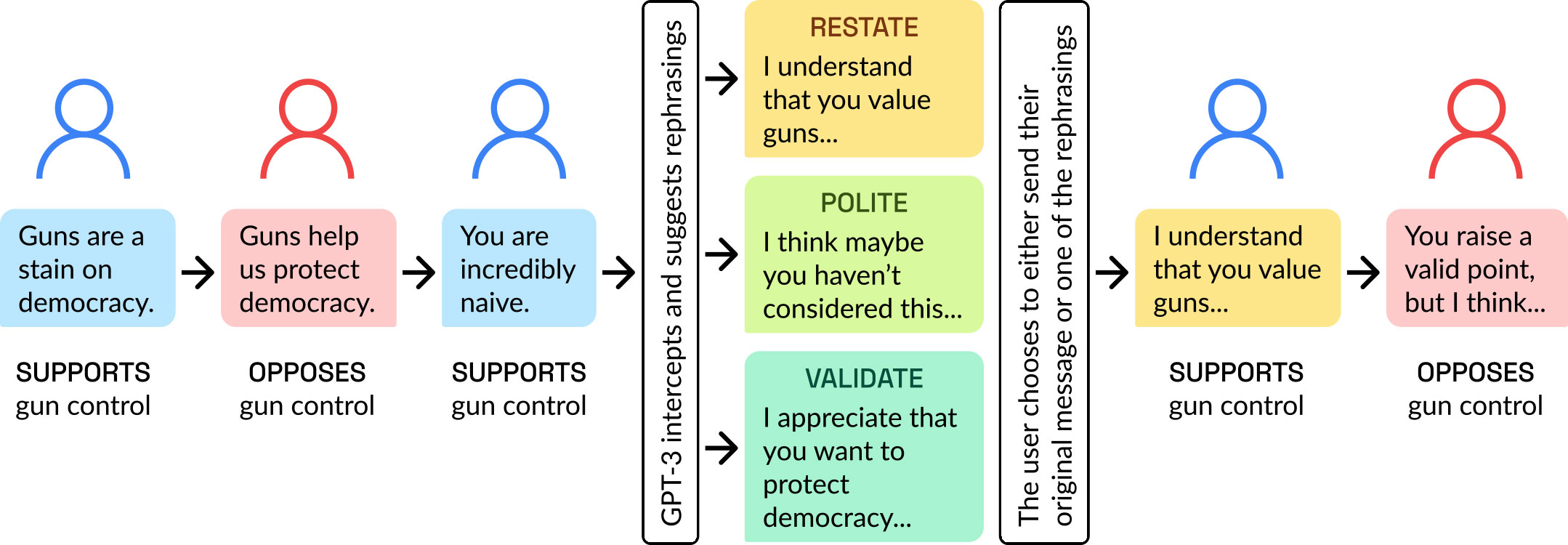

BAIL: Though debate on social media can seem like a lost cause, there is a large scientific literature that identifies the factors that produce more productive conversations about controversial topics, such as the one we studied (gun control). There are also many non-profit groups that work to implement these evidence-based strategies on the ground. But these interventions are just a drop in the bucket, since getting people who disagree to meet up in person, and recruiting a skilled mediator to facilitate their discussion, is very difficult. My co-authors and I wondered: What if AI could serve as a kind of conflict mediator that nudges people to implement the type of strategies a human facilitator might recommend, such as “active listening,” or the practice of restating someone else’s argument back to them to demonstrate that you have heard them?

Q: How might this tool work in everyday online life, outside the structure of a research project?

BAIL: We are still in the early days of generative artificial intelligence, and much more research is needed before we can let tools such as GPT-4 run wild on our platforms. At the same time, many social media companies are currently exploring how to use such tools to decrease toxicity and perform content moderation, especially since many of the people who previously performed these tasks were recently laid off at large companies such as Facebook and Twitter. My co-authors and I want to ensure that such efforts are informed by scientific evidence, and we believe our work is a first step in this direction.

Q: What is the chatbot providing to the conversation that the two human participants are not providing themselves?

BAIL: Anyone who has been in an argument knows how hard it can be to explain why things got out of hand. Very often, there are simple explanations: people do not feel heard, they feel disrespected, or they feel like they are talking past the person they are arguing with. Arguments can escalate for one, two, or all three of these reasons. Our intervention simply nudges people to use communicative tactics that make each of these outcomes less likely.

Q: In this role, is your chatbot corrective in nature? In other words, are people generally inclined toward online nastiness or bias that needs correcting?

BAIL: Our chatbot was not designed to pull people back from the brink after they have already started arguing. Instead, it is designed to structure conversations so that their overall trajectory is more productive, less stressful, and more likely to include a genuine exchange of ideas.

Q: It seems that so much political conversation on social media is conducted in bad faith, using misinformation or deception, that people simply aren’t interested in an honest discussion. Is this a consideration for you?

BAIL: So many people are focused on the dangers of generative AI on social media, and there are clear and present dangers related to misinformation. At the same time, very few people are focused on the other side of the coin: How can we use AI to nudge people in the other direction, perhaps before they become susceptible to misinformation or other polarizing behaviors? There has been so much focus on the dark side of generative AI that we risk overlooking a major opportunity to leverage these powerful new tools to enable the braver angels of our nature.